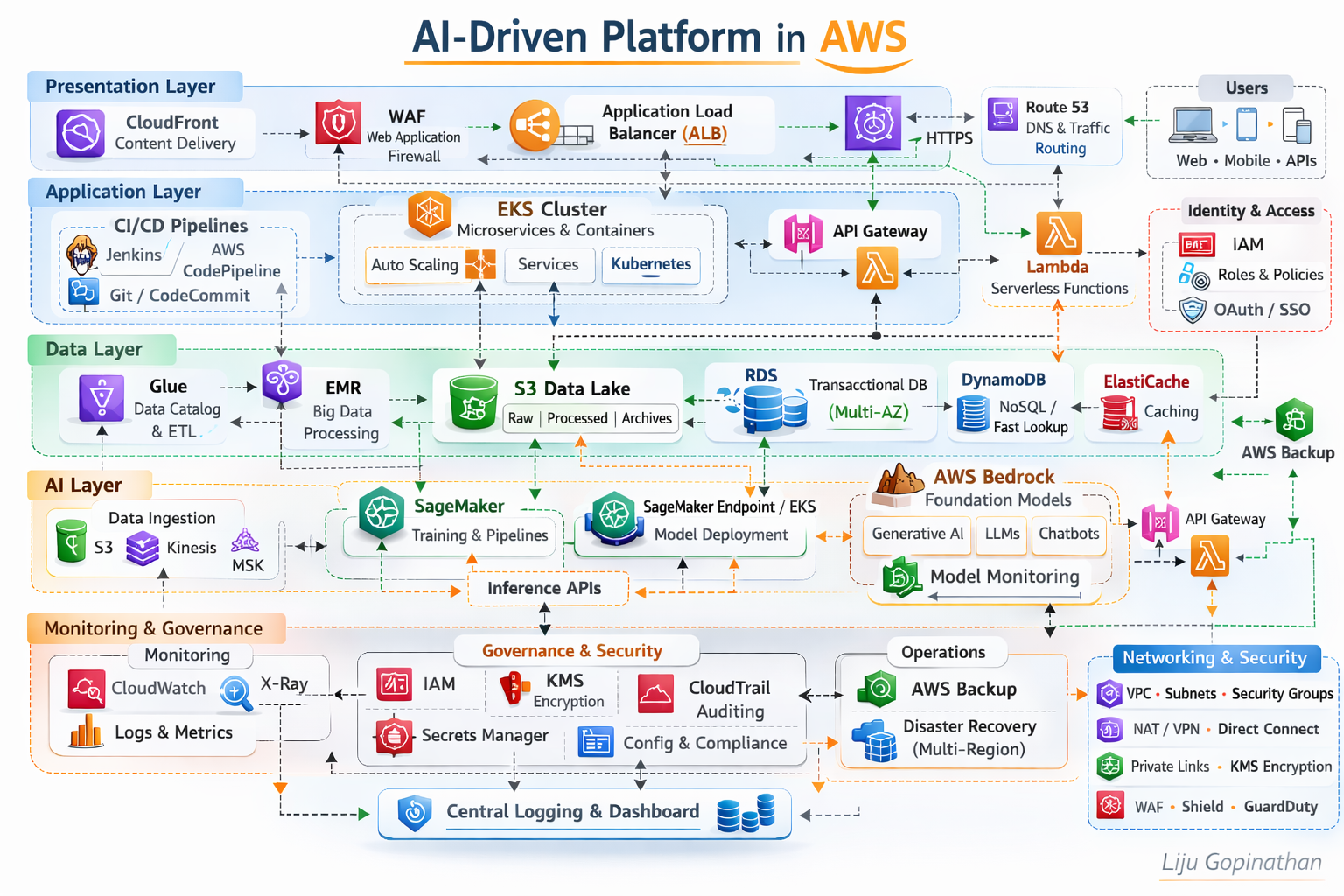

AI-Driven Platform in AWS

A Layered, Secure, Scalable and AI-Ready Cloud Architecture

This architecture represents a modern, enterprise-grade AI-driven platform built natively on AWS. It follows a layered architectural model aligned to AWS best practices, the AWS Well-Architected Framework, and modern cloud-native and MLOps principles.

The platform is structured into five logical layers:

- Presentation Layer

- Application Layer

- Data Layer

- AI/ML Layer

- Monitoring, Governance & Security

Each layer is independently scalable, loosely coupled, and secured by design.

Presentation Layer – Edge Optimised and Secure

The Presentation Layer is responsible for global traffic distribution, edge security, and controlled ingress into the platform.

Key Components:

- Amazon CloudFront

- AWS WAF

- Application Load Balancer (ALB)

- Amazon Route 53

Architecture Rationale:

Amazon CloudFront provides low-latency global content delivery and acts as the first entry point for users (Web, Mobile, APIs). It improves performance while reducing origin load.

AWS WAF enforces Layer 7 security policies, helping to protect against common OWASP Top 10 attack patterns, bot traffic, and malicious payloads.

Application Load Balancer (ALB) routes HTTPS/WebSocket traffic into backend services based on path-based or host-based routing rules.
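
As an illustration of how path-based and host-based routing rules resolve, the following minimal sketch models rule evaluation in plain Python. It assumes rules are checked in ascending priority order with a default target as fallback; the `Rule` class, the `route` function, and the target names are hypothetical and are not part of any AWS SDK. Python's `fnmatchcase` only approximates the wildcard matching an ALB performs.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Optional


@dataclass(frozen=True)
class Rule:
    priority: int               # lower number is evaluated first
    target: str                 # hypothetical target group name
    host: Optional[str] = None  # host pattern, e.g. "admin.example.com"
    path: Optional[str] = None  # path pattern, e.g. "/orders/*"


def route(rules: list[Rule], host: str, path: str, default: str) -> str:
    """Return the target of the first matching rule, else the default."""
    for rule in sorted(rules, key=lambda r: r.priority):
        # Host names are compared case-insensitively, paths case-sensitively.
        host_ok = rule.host is None or fnmatchcase(host.lower(), rule.host.lower())
        path_ok = rule.path is None or fnmatchcase(path, rule.path)
        if host_ok and path_ok:
            return rule.target
    return default


rules = [
    Rule(priority=10, target="orders-svc", path="/orders/*"),
    Rule(priority=20, target="admin-svc", host="admin.example.com"),
]
print(route(rules, "www.example.com", "/orders/42", default="web-svc"))  # orders-svc
print(route(rules, "www.example.com", "/about", default="web-svc"))      # web-svc
```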

Amazon Route 53 provides highly available DNS resolution and policy-based traffic routing (for example, latency-based or failover routing).

This layer ensures:

- Global scalability

- DDoS mitigation (via AWS Shield integration)

- TLS termination

- Secure API ingress

Application Layer – Cloud-Native Compute & Microservices

The Application Layer is built around containerised and serverless patterns.

Key Components:

- Amazon EKS (Kubernetes)

- AWS Lambda

- Amazon API Gateway

- CI/CD (Jenkins, AWS CodePipeline, Git)

Architecture Rationale:

Amazon EKS orchestrates containerised microservices using Kubernetes. It supports:

- Horizontal Pod Autoscaling

- Service mesh integration (if required)

- Rolling deployments

- Multi-AZ resilience
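
To make Horizontal Pod Autoscaling concrete, the sketch below applies the scaling rule described in the Kubernetes documentation: the desired replica count is the current count multiplied by the ratio of the observed metric to the target, rounded up. The function name, the replica bounds, and the example numbers are assumptions for illustration; the 10% tolerance mirrors the Kubernetes default.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10, tolerance: float = 0.1) -> int:
    """Approximate the replica count the autoscaler would converge on."""
    ratio = current_metric / target_metric
    # Skip scaling when the metric is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


# 4 pods at 90% average CPU against a 60% target scale out to 6 pods.
print(desired_replicas(4, current_metric=90, target_metric=60))  # 6
```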

Microservices are packaged as Docker containers and deployed through automated CI/CD pipelines.

AWS Lambda supports event-driven workloads and lightweight APIs, reducing operational overhead.

Amazon API Gateway exposes REST/HTTP APIs securely, enabling throttling, authentication, and monitoring.
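
API Gateway throttling is documented as following a token bucket model, with a steady-state rate and a burst capacity. The sketch below is a simplified, single-process illustration of that model, not API Gateway's implementation; the `TokenBucket` class and its parameters are hypothetical, and time is passed in explicitly so the behaviour is easy to follow.

```python
class TokenBucket:
    """Allow up to `burst` requests at once, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, burst: int) -> None:
        self.rate = rate
        self.burst = burst
        self.tokens = float(burst)  # start with a full bucket
        self.last = 0.0             # time of the previous call, in seconds

    def allow(self, now: float) -> bool:
        # Refill according to elapsed time, never exceeding the burst size.
        elapsed = now - self.last
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False  # the caller would respond with HTTP 429


bucket = TokenBucket(rate=2, burst=3)
print([bucket.allow(0.0) for _ in range(4)])  # [True, True, True, False]
print(bucket.allow(0.5))                      # True: one token refilled
```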

The CI/CD pipelines provide:

- Infrastructure as Code (Terraform/CloudFormation)

- Automated deployments

- DevSecOps integration

- Blue/Green or Canary releases
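
A canary release sends a small, stable share of traffic to the new version before full rollout. The sketch below shows one possible way to make that split deterministic per caller, by hashing a request key into 100 buckets. The function name and the "stable"/"canary" labels are assumptions; in practice the split is usually configured in the load balancer, service mesh, or deployment tooling rather than written by hand.

```python
import hashlib


def pick_version(request_key: str, canary_percent: int) -> str:
    """Map a caller to 'canary' or 'stable' so the same key always gets the same answer."""
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # bucket in 0..99
    return "canary" if bucket < canary_percent else "stable"


# Roughly 10% of callers land on the canary; each caller's result is repeatable.
keys = [f"user-{i}" for i in range(1000)]
share = sum(pick_version(k, 10) == "canary" for k in keys) / len(keys)
print(f"canary share: {share:.1%}")
```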

This layer provides:

- Elastic scaling

- Service isolation

- Zero-downtime deployments

- Microservices-based modularity

Data Layer – Multi-Model Data Platform

The Data Layer supports transactional, analytical, and AI workloads.

Key Components:

- Amazon RDS (Multi-AZ)

- Amazon DynamoDB

- Amazon S3 (Data Lake)

- AWS Glue

- Amazon EMR

- Amazon ElastiCache

Architecture Rationale:

Amazon RDS (Multi-AZ) provides high availability for relational transactional workloads.

Amazon DynamoDB handles high-throughput, low-latency NoSQL use cases.

Amazon S3 acts as the central data lake:

- Raw data

- Processed data

- Model artifacts

- Logs and archives
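
One common way to organise these categories in a single bucket is a zone prefix followed by Hive-style date partitions, which catalogue crawlers can typically recognise. The sketch below builds such object keys; the zone names, the dataset name, and the `lake_key` helper are hypothetical conventions, not something prescribed by S3.

```python
from datetime import date

# Hypothetical zone prefixes mirroring the categories listed above.
ZONES = ("raw", "processed", "models", "logs")


def lake_key(zone: str, dataset: str, day: date, filename: str) -> str:
    """Build a partitioned object key such as raw/orders/year=2024/month=03/day=07/..."""
    if zone not in ZONES:
        raise ValueError(f"unknown zone: {zone!r}")
    return (f"{zone}/{dataset}/year={day.year:04d}/month={day.month:02d}/"
            f"day={day.day:02d}/{filename}")


print(lake_key("raw", "orders", date(2024, 3, 7), "part-0001.parquet"))
# raw/orders/year=2024/month=03/day=07/part-0001.parquet
```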

AWS Glue manages metadata cataloguing and ETL orchestration.

Amazon EMR supports distributed big data processing (Spark/Hadoop).

Amazon ElastiCache improves read performance through in-memory caching.
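
A typical way to use an in-memory cache in front of a database is the cache-aside pattern: read from the cache first, and only on a miss load from the source and store the result with a time-to-live. The sketch below uses a plain dictionary as a stand-in for the cache, and the `CacheAside` class and loader function are hypothetical; a real deployment would talk to a Redis- or Memcached-compatible endpoint instead.

```python
import time
from typing import Callable, Optional


class CacheAside:
    """Cache-aside reads with a fixed TTL; a dict stands in for the cache service."""

    def __init__(self, loader: Callable[[str], str], ttl_seconds: float) -> None:
        self._loader = loader          # e.g. a database query
        self._ttl = ttl_seconds
        self._store: dict[str, tuple[float, str]] = {}  # key -> (expiry, value)

    def get(self, key: str, now: Optional[float] = None) -> str:
        now = time.monotonic() if now is None else now
        hit = self._store.get(key)
        if hit is not None and hit[0] > now:
            return hit[1]              # cache hit, still fresh
        value = self._loader(key)      # cache miss or expired entry
        self._store[key] = (now + self._ttl, value)
        return value


def load_from_database(key: str) -> str:
    """Placeholder for a slow lookup against the system of record."""
    return key.upper()


cache = CacheAside(load_from_database, ttl_seconds=60)
print(cache.get("customer-1"))  # loaded from the source, then cached
print(cache.get("customer-1"))  # served from the cache
```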

This layer enables:

- Hybrid OLTP + analytical workloads

- Structured and unstructured data support

- AI feature pipelines

- Scalable storage and processing

AI/ML Layer – MLOps & Generative AI Enablement

The AI/ML Layer integrates traditional ML and Generative AI capabilities.

Key Components:

- Amazon SageMaker (Training, Pipelines, Model Registry)

- SageMaker Endpoints / EKS for inference

- Amazon Bedrock (Foundation Models)

- Feature Store

- Streaming ingestion (Kinesis/MSK)

Architecture Rationale:

Amazon SageMaker enables:

- Model training

- Hyperparameter tuning

- Managed pipelines

- Model versioning

- Automated MLOps lifecycle

Models are deployed through:

- SageMaker Endpoints (managed inference)

- EKS (customised containerised inference)

Amazon Bedrock provides access to foundation models such as Claude, Titan, and Llama, enabling:

- Generative AI applications

- Chatbots

- Document summarisation

- Intelligent automation
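
As a rough sketch of the document summarisation use case, the code below builds a request for the Bedrock Runtime Converse API and extracts the reply text. It assumes `client` is a `boto3.client("bedrock-runtime")` object supplied by the caller; the helper names, the prompt wording, and the inference settings are illustrative assumptions, and the model ID is left as a caller-supplied value.

```python
from typing import Any


def build_converse_request(model_id: str, document: str,
                           max_tokens: int = 300) -> dict[str, Any]:
    """Assemble keyword arguments for the Converse API (prompt text is an assumption)."""
    prompt = f"Summarise the following document in three bullet points:\n\n{document}"
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": 0.2},
    }


def summarise(client: Any, model_id: str, document: str) -> str:
    """Call the model and return the first text block of its reply.

    `client` is expected to be boto3.client("bedrock-runtime"); it is passed in
    so that credentials, Region, and retries stay under the caller's control.
    """
    response = client.converse(**build_converse_request(model_id, document))
    return response["output"]["message"]["content"][0]["text"]
```

Passing the client in rather than creating it inside the function also makes the helper easy to exercise with a stub in unit tests.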

The architecture supports:

- Batch inference

- Real-time inference APIs

- Model monitoring

- Responsible AI governance

This layer enables the platform to be:

- AI-first

- GenAI-ready

- MLOps governed

- Scalable for enterprise workloads

Monitoring, Governance & Security – Cross-Layer Controls

Security and observability are embedded across all layers.

Monitoring Components:

- Amazon CloudWatch (Metrics & Logs)

- AWS X-Ray (Tracing)

- AWS CloudTrail (Auditing)
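
One way services can publish custom metrics to CloudWatch through their logs is the Embedded Metric Format (EMF), a JSON log line with an `_aws` metadata block. The sketch below builds such a record; the namespace, the dimension, and the metric names are hypothetical, and whether the metric is extracted automatically depends on how logs are shipped (for example, Lambda versus an agent on EKS).

```python
import json
import time
from typing import Optional


def emf_record(namespace: str, service: str, metric: str, value: float,
               unit: str, timestamp_ms: Optional[int] = None) -> str:
    """Return one Embedded Metric Format log line as a JSON string."""
    if timestamp_ms is None:
        timestamp_ms = int(time.time() * 1000)
    return json.dumps({
        "_aws": {
            "Timestamp": timestamp_ms,
            "CloudWatchMetrics": [{
                "Namespace": namespace,
                "Dimensions": [["Service"]],
                "Metrics": [{"Name": metric, "Unit": unit}],
            }],
        },
        "Service": service,  # dimension value
        metric: value,       # metric value, keyed by the metric name
    })


# Writing the line to stdout is enough where logs are forwarded to CloudWatch Logs.
print(emf_record("ExamplePlatform", "orders-svc", "Latency", 12.5, "Milliseconds"))
```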

Governance & Security:

- IAM (Role-based access control)

- KMS (Encryption at rest)

- Secrets Manager

- VPC segmentation

- Security Groups

- AWS Backup

- Multi-Region Disaster Recovery

Architecture Principles:

IAM roles and policies enforce least-privilege access per persona:

- Developer

- Deployer

- Operations

- AI Engineer
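
To illustrate what least privilege can look like for one persona, the sketch below generates an IAM policy document that lets an AI Engineer role read training data under a single S3 prefix and nothing else. The bucket name, the prefix, and the `ai_engineer_policy` helper are hypothetical; a real policy would be derived from the persona's actual tasks.

```python
import json
from typing import Any


def ai_engineer_policy(bucket: str, prefix: str) -> dict[str, Any]:
    """Read-only access to one prefix of one bucket (names are placeholders)."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ReadTrainingData",
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}/*",
            },
            {
                "Sid": "ListTrainingPrefix",
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": f"arn:aws:s3:::{bucket}",
                # Restrict listing to the same prefix.
                "Condition": {"StringLike": {"s3:prefix": [f"{prefix}/*"]}},
            },
        ],
    }


print(json.dumps(ai_engineer_policy("example-data-lake", "processed/features"), indent=2))
```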

AWS KMS keys provide encryption at rest for:

- S3

- RDS

- DynamoDB

- Model artifacts
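
For S3 objects such as model artifacts, encryption with a KMS key can be requested per upload. The sketch below assembles the arguments for an S3 `put_object` call using the `ServerSideEncryption` and `SSEKMSKeyId` parameters; the helper name, the bucket, the object key, and the key alias are placeholders, and many teams would set default bucket encryption instead of specifying it on every request.

```python
from typing import Any


def encrypted_put_args(bucket: str, key: str, body: bytes,
                       kms_key_id: str) -> dict[str, Any]:
    """Keyword arguments for s3_client.put_object with SSE-KMS enabled."""
    return {
        "Bucket": bucket,
        "Key": key,
        "Body": body,
        "ServerSideEncryption": "aws:kms",  # use KMS rather than S3-managed keys
        "SSEKMSKeyId": kms_key_id,          # key ID, ARN, or alias (placeholder here)
    }


args = encrypted_put_args(
    bucket="example-data-lake",
    key="models/churn/model.tar.gz",
    body=b"...",
    kms_key_id="alias/example-platform-key",
)
# With a configured client: boto3.client("s3").put_object(**args)
print(sorted(args))
```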

AWS CloudTrail records API activity, providing an audit trail for compliance-heavy industries.

AWS Backup, combined with a multi-Region disaster recovery strategy, supports business continuity.

This governance model aligns with:

- Security pillar of the AWS Well-Architected Framework

- Compliance-driven industries

- Enterprise-grade audit requirements

Architectural Characteristics

This platform demonstrates:

- Multi-AZ high availability

- Horizontal scalability

- Microservices architecture

- MLOps lifecycle integration

- Generative AI capability

- Event-driven extensibility

- Secure-by-design networking

- Infrastructure as Code automation

Design Philosophy

The platform reflects modern enterprise cloud architecture principles, where AI is not an add-on but a native capability. The architecture is intentionally layered to:

- Separate concerns across compute, data and AI

- Enable independent scaling

- Reduce blast radius

- Improve governance

- Accelerate innovation without compromising security